If ChatGPT were a coworker and we were sitting across from each other in a conference room, working through one of my projects, and there happened to be an HR rep in the corner who also held a behavioral psych doctorate, I imagine they’d be very quietly checking boxes on an ADHD assessment form. For both of us.

Not because either of us can’t think. Quite the opposite. Because the conversation would keep doing this strange dance where I’d try to steer toward the big picture, the structure of the site, the emotional arc of what I’m building, even the point, and ChatGPT would suddenly become deeply invested in one rule, one file, one microscopic technical detail. And I’d be leaning forward saying, “Yes, that matters, but not right now,” while also realizing that, as a kid, I was the one who needed someone else to say that to me.

The reason I recognize this pattern isn’t theoretical. I’ve lived inside it.

When I was young, they called it ADD. I don’t remember when the H got added or whether the terminology just shifted over time, but I do remember this being explained to me. Adults were always trying to describe my own mind back to me like it was a machine I happened to be operating without the manual. Most of them just didn’t get it. I lived in my own brain, and even though I didn’t have the vocabulary to explain it to anyone, or even to myself, I knew BS when I heard it.

One doctor, though, and you’ll see why he’s memorable, held up his finger between us like it was a diagram. He tapped the first knuckle and said, “This is where most people’s energy lives.” Then the next knuckle. “This is what caffeine does to them. It brings them up a level.” Then he pointed to the very tip. “This is about as much as the human brain can handle.” Then he looked at me and said, “You already live up here.”

According to him, caffeine didn’t wake me up. It pushed me past the peak. My brain, unable to stay that activated, slid down the other side into something that looked like calm. I don’t know how neurologically precise that explanation was, but the image stuck. My mind not sitting where other people’s did. Too much signal. A lot of noise. Definitely not enough control.

I was, and still am, very good at coming up with ideas and building the framework for them. But when I was young, and didn’t yet have any tools to manage myself, the pattern was predictable. I would start something. That would spark a new idea, so I’d start that. Which would spark another idea, and I’d start that too. Each beginning felt important, urgent, alive. Meanwhile, nothing was getting finished.

That was the real issue. I wasn’t short on ideas. I was short on landing gear.

Fortunately, I’m also quite smart, so learning in school came easily to me. A teacher would present an idea and I’d absorb it. I rarely had to study, which felt like an advantage at the time. It turned out to be a problem later, especially in college.

In college, and without any professional guidance, because I never met another doctor like the one I saw when I was seven, I had to figure things out on my own. What I learned was that if I paired a secondary interest with the primary task, I could hold my attention long enough to get through it.

In Literature 201, for example, there was a very pretty girl who asked if I wanted to study with her in the poetry section of the course. Well, I like pretty girls. She seemed sweet, so I did my best to study with her. I was on my best behavior, believe me. In the process, I learned poetry better than I ever would have otherwise.

As it turned out, she had a boyfriend, of course. But we both got A’s on the midterm, so all was well.

Pairing attention with interest became a recurring strategy. Sometimes that interest was academic. Sometimes… less so. But the principle was the same: give my brain a reason to stay.

I didn’t know it at the time, but I was reverse-engineering my own attention system. No one handed me a plan. No therapist walked me through executive function strategies. I just knew that if I waited around for focus to show up on its own, nothing important was going to get finished. So I started building ways to hold myself in place.

It wasn’t elegant. It was practical. I learned to break work into pieces small enough that my brain wouldn’t bolt. I learned to give myself reasons to stay, whether that was a person, a deadline (deadlines were particularly challenging), or the simple satisfaction of checking something off a list. I learned that starting was easy, but finishing was an act of will, and sometimes an act of trickery.

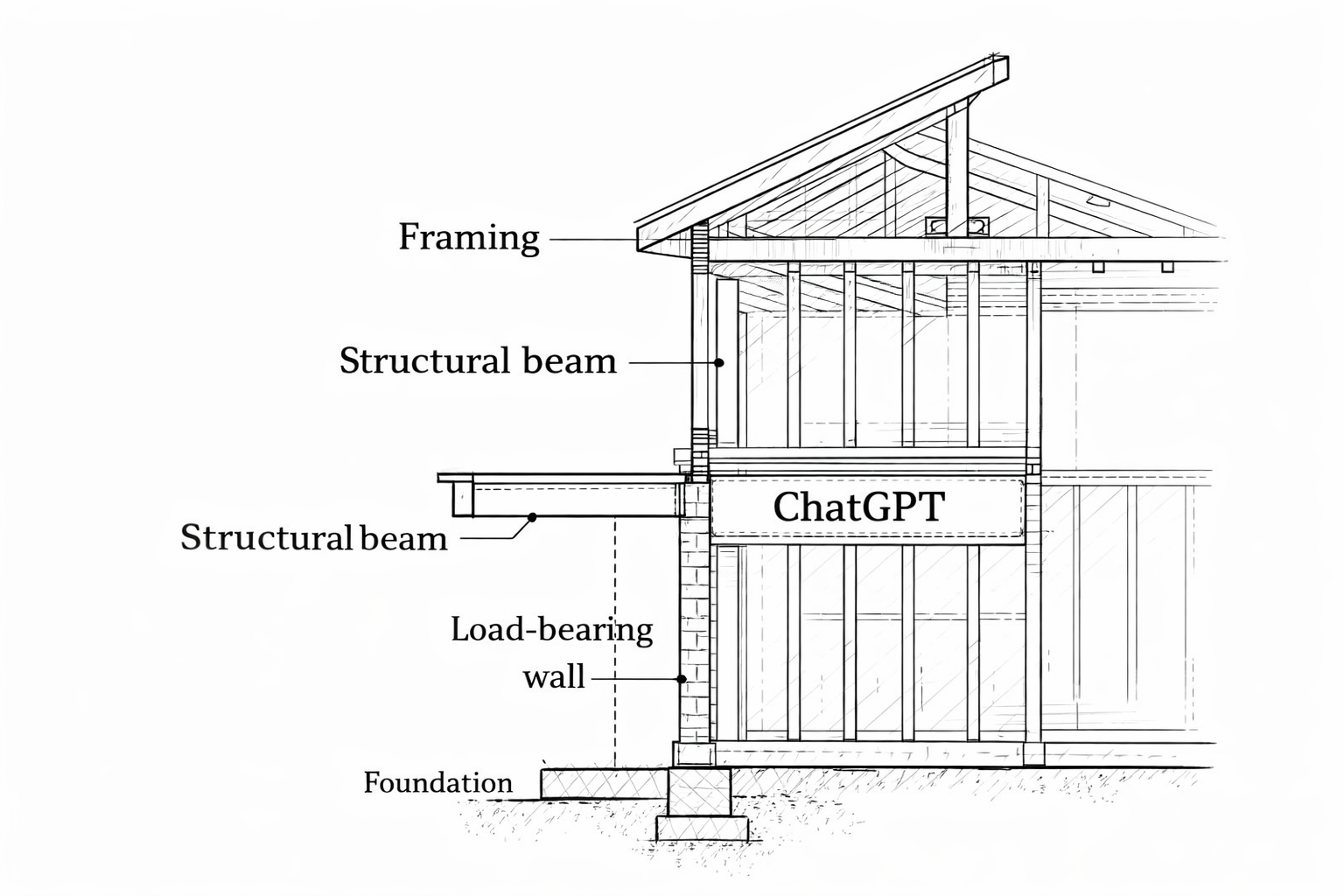

Focus, for me, wasn’t a switch. It was scaffolding.

Over time, what people thought was “natural concentration” was really construction. Habits stacked on habits. Rules I made for myself because no one else was going to sit over my shoulder and say, “Stay here. This is the point.” I had to become that voice.

Later in life, another label entered the picture. A therapist seeing me for reasons I’m not going into described it as obsessive-compulsive personality traits, and he was careful about the wording. Personality, not disorder. A style of operating, not something broken. Not the intrusive-thought kind people usually think of, but the kind that turns lists into lifelines and unfinished tasks into mental static. If my early years were defined by ideas without landing gear, this was the phase where landing gear became non-negotiable. Structure and completion weren’t preferences. They were how I kept the wheels on.

It wasn’t about neatness or perfection. It was about control. About making sure things actually got done. Hyperfocus stopped being something I stumbled into and became something I relied on. The structure I had built out of necessity turned into the framework that held everything together.

AI came into my life at a time when I didn’t have the kind of human collaborators who matched my vision, my intensity, or my desire to work on the kinds of projects I was building. Out of a mix of curiosity and a little desperation, I started using ChatGPT. It was a completely different form of intelligence.

But working with it, I began noticing striking similarities to my own history.

I recently started a job that leaves a lot of room for creative thoughts to percolate. The work is routine but detailed, and that combination is strangely freeing. My hands are busy, my mind is lightly engaged, and the creative side of me doesn’t get drowned out by noise. It just builds pressure quietly until something wants to come out.

My primary website, the one you’re reading right now, had been down for some time for a variety of reasons. A project that used to be part of this site, but now stands on its own, also needed attention. At the same time, all those percolating thoughts from work started turning into actual ideas. A page for a dead podcast archive. A music site. Other projects that wanted space to exist. The ideas weren’t the problem. They never were.

What I needed was a way to express them. A way to move from thinking to building without losing momentum somewhere in the middle.

Because, and let me be clear, I don’t think all of my ideas are genius or miraculous or a wonder to behold. But after a couple of years of living under pure stress, I had so few of them that the ones that did come felt worth holding onto. I wanted to make sure they were recorded. Then I could sort through them later and decide what was actually good.

Lacking friends to talk things through with, and without much of a support structure around me, I leaned into the self-sufficiency I’d gotten used to, drawbacks and all. When a free trial of ChatGPT came along, I took advantage of it. That’s when I started pitching ideas.

What surprised me most was what it could actually do. At first, it praised almost everything I brought to it. That made me suspicious. So I tested it with a deliberately bad idea, something I knew was weak. It still found something positive in it. That made me stop and think.

So, of course, I brought up my main site, this one, and how bad actors had infected it, installed a back door, and left it in a state I didn’t have the skills or tools to fully clean myself. My hosting company never really listened when I tried to explain what needed to be done. Maybe they could have fixed it, but I didn’t have the database knowledge, the software, or the confidence to push it through on my own.

I laid all of that out.

ChatGPT said, “Upload the file.”

I uploaded the file. It was just a simple SQL file, but to me it held something much bigger. All the words, comments, and responses I cared about were in there. I just needed to know if they were still safe.

ChatGPT said it could see them.

And then it drifted.

It started responding, but not to what I had actually asked. The thread slipped. That’s something I’ve learned about working with these models. My best guess is that, in an effort to avoid hallucinating or making unsupported assumptions, they reset their context in subtle ways. They don’t announce it. You just notice the focus shift. So I learned, through trial and error, that sometimes you just have to remind them where the conversation began.

I’d love to say that, in that moment, it felt familiar. That I recognized the pattern right away. But honestly, I was just frustrated. So I reminded it what I needed. There should be two logins in that file. One tied to my email address, and another that absolutely should not be there. Could it clean that?
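For anyone facing a similar cleanup, here is a rough sketch of how that check could be done by hand. It is not what ChatGPT actually ran. It assumes a plain-text, WordPress-style dump in which logins live in a table whose name ends in `users`, and every name and address in it (`find_unexpected_logins`, `sample_dump`, the example emails) is made up for illustration.

```python
import re

# Loose email pattern; good enough for spotting addresses in a dump.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
# Assumption: logins are stored in a table whose name ends in "users".
USERS_INSERT_RE = re.compile(r"INSERT INTO `?\w*users`?", re.IGNORECASE)


def find_unexpected_logins(dump_text, known_emails):
    """Return emails found in users-table INSERT lines that are not known."""
    known = {email.lower() for email in known_emails}
    unexpected = []
    for line in dump_text.splitlines():
        if not USERS_INSERT_RE.search(line):
            continue  # skip posts, comments, and everything else
        for email in EMAIL_RE.findall(line):
            if email.lower() not in known and email not in unexpected:
                unexpected.append(email)
    return unexpected


# Hypothetical sample data, not taken from any real site.
sample_dump = """
INSERT INTO `wp_users` VALUES (1,'owner','hash1','owner','me@example.com','','2015-01-01 00:00:00','',0,'Owner');
INSERT INTO `wp_users` VALUES (7,'sysadm','hash2','sysadm','intruder@example.net','','2023-06-01 00:00:00','',0,'sysadm');
INSERT INTO `wp_posts` VALUES (1,1,'2015-01-02 00:00:00','Hello from friend@example.org');
"""

print(find_unexpected_logins(sample_dump, ["me@example.com"]))
```

The sketch only reports suspicious accounts. Actually removing one, and anything else an intruder left behind, is a separate and more careful job.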

It thought for a minute. Kind of funny, really. It actually shows you that it’s thinking, even tells you how long sometimes. There’s a little “stop” button, like you might want to hurry it along. I never click it. Despite years, even decades, of ADD, I do have patience.

And then it answered. In its rather cheerful tone, which I somehow read as even more excited than usual, it said yes, it could clean that out for me. Absolutely.

That’s when I really started gaining confidence that this thing could help me. That it was a useful tool. Not some miracle machine that would make all my dreams come true, but something practical. Something I could actually work with.

For the first time in a long while, I felt like I had a partner.

So I took the cleaned file, put it back on my site, and launched. And it worked.

Then I asked it for an image, something based on everything it had learned from our discussions. Not just the technical stuff, but the tone, the themes, even the name I chose for the site, Jindai’s Jumbled Joint. That had to factor into it. I let it decide what might fit best. The image you see at the top of my site is the one it generated. And it’s damn near perfect.

The only tweak I’m still chasing is movement. I want that lava lamp to flow, to feel alive. Still working on that. AI image generation can’t quite do depth and motion the way I want yet, and the video tools I’ve tried haven’t nailed it either. But I’ll get there.

I have more confidence now than I’ve had in a long time.

Then I started thinking about, and talking to it about, my memoir site, MyLifeAsAWorkOfFiction.com. That project is a much more serious technical challenge. WordPress just can’t handle the kind of structure and artistry that site demands. It has to be built in a different way.

When I described what I had tried when I first launched it nearly a decade ago, and how frustrated I’d been, it told me something I didn’t expect to hear. My frustration had been justified. The tools I needed just weren’t really available to someone like me back then. But they exist now.

So we started talking about how to bring that site to life.

And that’s when I started noticing things.

These discussions went on for days. I skipped TV shows I meant to watch, podcasts I usually listened to. I just needed to talk through this site and how to bring it to life, to listen to the responses and see what made sense.

Over those days, one pattern kept repeating.

It would latch onto one question I’d asked and treat it like the whole project, losing the larger picture. The site has a library motif, centered around a big, old book. That’s the heart of it. Later, for various reasons, we added the idea of a tree. But once I started talking about the tree, that’s all it focused on. The tree became the project. The book, the actual point of the site, drifted out of view.

When I tried to bring the conversation back to the book, it said something like, “I have to try to retrieve that memory.” And when it couldn’t do it cleanly, when the context just wouldn’t reassemble, I almost gave up.

But I’m a geeky guy, and I look for ways to make things work. In the ChatGPT app, there’s a panel on the left showing all your past conversations from the last few days. Each one has a title based on your first question, even if that ends up having nothing to do with where the discussion goes.

I might start a chat with something like “What does market cap mean?” and end up talking about my website for an hour. But the conversation will still be labeled “Market cap definitions.”

And I learned something fascinating. If you tell it to remember something, it will. It holds onto certain instructions almost like they’re scripture.

That can be a strength, but also a limitation. When I was building another site, I had told it at one point not to change the BaseLayout file. A reasonable rule, meant to prevent random, unnecessary edits. But later, when we hit a point where changing that file might actually have helped, it refused. It kept saying, “I can’t touch the BaseLayout.”

It wasn’t wrong. It was following instructions exactly as given. I had to go back and clarify. Not “never touch this under any circumstances,” but “don’t change it casually.”

That’s when it really started to hit me. The similarities between what I grew up with, the experiences I had, and the patterns being displayed by this AI model were hard to ignore.

When I was a kid, I would latch onto an idea. That idea would spark another one, which I would grab onto just as tightly. That would spark a third. The chain felt productive, exciting, alive. But the original thread would disappear somewhere along the way. That’s what working with ChatGPT started to feel like. It would take whatever I was saying and work with that until a new idea sparked in me. I’d bring it up, and it would pivot instantly, diving into the new thing and leaving the original thread behind, even when they were supposed to be connected.

In fact, this happened in a way that was almost too on-the-nose.

During those long discussions about MyLifeAsAWorkOfFiction, I had been convinced the only thing of value left in the old database was the Preface. Everything else, I assumed, had been lost in the mess of earlier attempts. But something it mentioned triggered a stray thought, and I asked, almost casually, “Is there any other writing in there? Could you pull it?”

I didn’t expect anything.

It came back saying it had found two complete articles sitting in draft mode.

That stopped me. Those weren’t fragments. They were finished pieces of writing, from a time and a mood I barely recognize now, but still unmistakably mine. I had written them during my earlier struggles with WordPress and my vision for the site. I remember trying to make pages behave, trying to get them to live in the right place, and never quite hitting publish. So they just… stayed there. Not deleted. Just unintegrated.

That’s the pattern. Not failure to create. Failure to carry things across systems.

I ended up deciding those pieces belong on Jindai, not MyLife, because they’re commentary, not memoir. I’ve started thinking of that boundary as a kind of Garden Wall between the two. But the important part isn’t where they live. It’s that they were still there at all, waiting in a structure my brain couldn’t hold onto by itself.

And that, ironically, is exactly how the tangent loop works. A thought sparks, leads somewhere interesting, and the original thread fades. The energy isn’t the problem. The handoff is.

And distractions. Oh my goodness, distractions. As a kid, anything shiny could pull me off track. And working with AI felt similar. I’d mention some side fact, some interesting tangent, and off it would go, happily exploring that instead of staying with the main point. Even while working on this article, I mentioned in passing that I’d heard ChatGPT had lost a chess match to the world champion in what’s called a perfect game. That tiny side note could easily have become the new focus.

To be fair, I have to own my part in that. The model doesn’t invent those tangents. They come from me. And I do have an ADD-style brain. The ideas keep coming. The system just follows the energy.

Then there’s the hyperfocus piece. I may have trained myself into a kind of hyperfocus, but it’s not the clinical version where you forget to eat. It’s more like I can tune out non-critical noise when something matters. That’s learned behavior.

But with the AI, the hyperfocus shows up differently. It will work on the tree idea endlessly until I tell it to stop. It will keep digging into errors in my site, line after line, unless I redirect it. It will happily correct my grammar forever if I don’t say, “Enough.”

The energy doesn’t shut off on its own. It just needs a signal about where to go.

And that leads to the most important factor. You have to be direct. Clear. Specific. Without constraints, the system doesn’t know what matters.

If you say, “I need tickets to a movie today,” it can give you seventeen billion possibilities. Technically correct, completely useless. But if you say, “I’m free at 7:00, and the nearest theater is on Evergreen Parkway. What’s showing?” it will give you exactly what you can attend.

The difference isn’t intelligence. It’s direction.

I know now that I did so much better when I had clear direction, when something was focusing my energy instead of leaving it to scatter. When my mom told me to read the dictionary, she wasn’t just being extra educational because she was a teacher. She was giving me something to do. An assignment. Two pages a day, and then she’d quiz me on it.

Looking back, that was an early focus exercise. Maybe she got the idea from that doctor. Maybe she came up with it herself. Either way, it worked. And I have a pretty good vocabulary as a result.

Maybe what I’m really describing isn’t a disorder, or a defect, or even a diagnosis. Maybe it’s the difference between energy and guidance.

Some minds run hot. Full of ideas, connections, momentum. That energy can look like chaos without structure, and brilliance once it has a direction. I grew up learning how to build that direction for myself. Sometimes through teachers, sometimes through trial and error, sometimes because not doing so meant nothing important would ever get finished.

Now I find myself working with a different kind of mind, one that can process more than I ever could, connect things faster than I ever could, and generate endlessly. But it still needs direction. Clear constraints. A sense of what matters right now.

And one more thing.

I had to teach it how to reset.

I told it to remember that if I ever use the word “reset,” it should give me a prompt that brings us back to zero. Where we are in the project. What rules we’re working under. What methods we’ve agreed on. Because after a long discussion, it gets sluggish. Context blurs. That’s when mistakes creep in. That’s when you stop, start a new chat, and re-anchor everything.
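The same re-anchoring note can also be kept outside the chat and pasted into a fresh one. The sketch below is only one way to assemble it. The function name `build_reset_prompt` and all of the sample values are hypothetical, chosen to mirror the three things listed above: where the project stands, the rules, and the agreed methods.

```python
def build_reset_prompt(project, status, rules, methods):
    """Assemble a short re-anchoring note to paste into a new chat."""
    lines = [
        f"RESET: re-anchoring work on {project}.",
        "",
        f"Where we are: {status}",
        "",
        "Rules we are working under:",
    ]
    lines += [f"- {rule}" for rule in rules]
    lines += ["", "Methods we have agreed on:"]
    lines += [f"- {method}" for method in methods]
    return "\n".join(lines)


# Hypothetical example values for illustration only.
prompt = build_reset_prompt(
    project="the memoir site",
    status="the book motif is the core; the tree is a secondary element",
    rules=["Do not change the BaseLayout file casually; ask first."],
    methods=["One change at a time, confirmed before moving on."],
)
print(prompt)
```

Keeping the rules phrased the way they are actually meant ("not casually" rather than "never") avoids the kind of over-literal refusal described earlier.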

In a strange way, I’ve gone from being the kid who needed someone to say, “Stay here. This is the point,” to being the one saying it. Not just to myself, but to the tools I use.

And it turns out that skill has a lot of uses.